3D mapping technology

OFH has extensive expertise in 3D mapping and distance measurement, and has worked on LIDAR, stereo imaging, time of flight, computational photography, light coding, structured illumination, and many other methods. Our clients have sold millions of units and are global leaders in robotic vision.

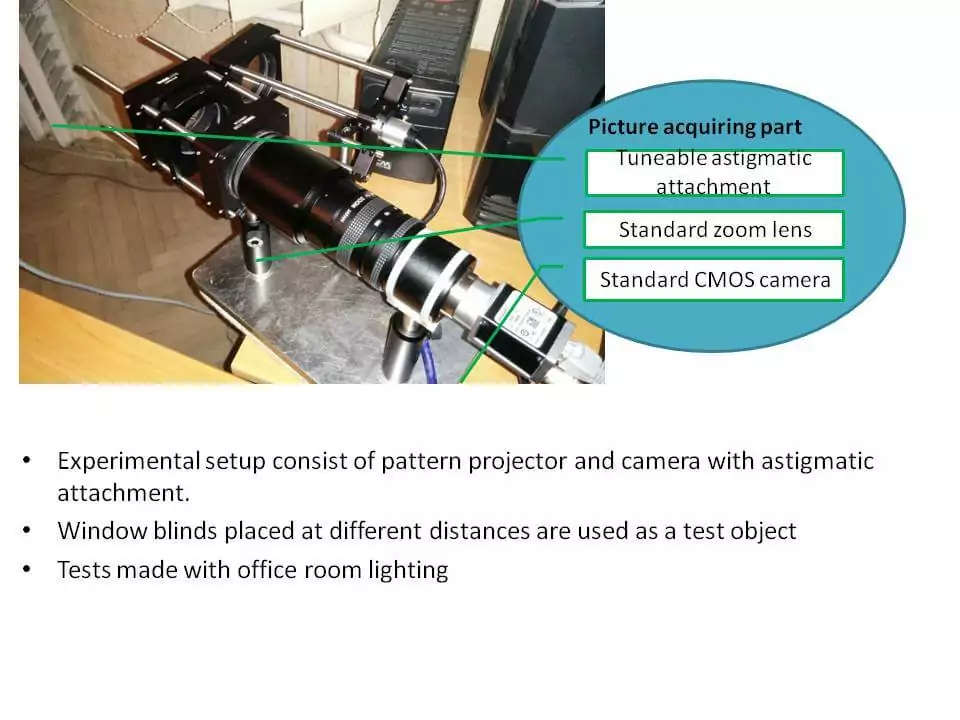

Below we describe a new approach developed by OFH and described in US Application No. 14/256,085. This method uses a pattern projector and an astigmatic lens placed in front of an image sensor to generate a depth map.

Technology Overview

Circular spots are projected (via a laser with a lenslet array or grating)

Astigmatic spots are collected at the image sensor

Image is decoded using various methods (Hough transform, boundary definition)

Distance is determined by the ratio between the long and short axes of each spot
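The last two steps can be illustrated with a minimal sketch. It is not the decoding method from the patent application: it swaps the Hough transform or boundary definition for intensity-weighted second-order image moments, which is a common way to estimate the axes of an elliptical spot. The function names and the calibration table (`calib_ratios`, `calib_distances_m`) are hypothetical, and the lookup assumes a working range in which the axis ratio changes monotonically with distance.

```python
import numpy as np


def spot_axis_ratio(patch):
    """Long/short axis ratio of one bright spot, from intensity-weighted moments."""
    patch = np.asarray(patch, dtype=float)
    total = patch.sum()
    if total <= 0:
        raise ValueError("patch contains no signal")
    ys, xs = np.indices(patch.shape)
    # Intensity-weighted centroid of the spot.
    cx = (xs * patch).sum() / total
    cy = (ys * patch).sum() / total
    dx, dy = xs - cx, ys - cy
    # 2x2 covariance of the intensity distribution around the centroid.
    cov = np.array([
        [(dx * dx * patch).sum(), (dx * dy * patch).sum()],
        [(dx * dy * patch).sum(), (dy * dy * patch).sum()],
    ]) / total
    small, large = np.linalg.eigvalsh(cov)  # eigenvalues in ascending order
    if small <= 0:
        raise ValueError("spot is degenerate along one axis")
    # Axis lengths scale with the square root of the variances.
    return float(np.sqrt(large / small))


def ratio_to_distance(ratio, calib_ratios, calib_distances_m):
    """Interpolate a distance from a hypothetical calibration table.

    calib_ratios must be increasing; values would come from a calibration run.
    """
    return float(np.interp(ratio, calib_ratios, calib_distances_m))
```

Because the ratio is normalized by the total intensity, scaling the brightness of the whole patch leaves the result unchanged, which mirrors the point that the method relies on spot shape rather than spot intensity.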

Key Benefits

Method is not sensitive to multi-path interference

Less sensitive to changes in object reflection/scattering, or changes in ambient light within a scene. This is because the method relies on changes in spot shape (not spot intensity) to determine distance

For certain applications, the system may be used without a projected pattern

System can be very small; in fact, the performance is better when the projection axis and collection path are next to each other

Concept

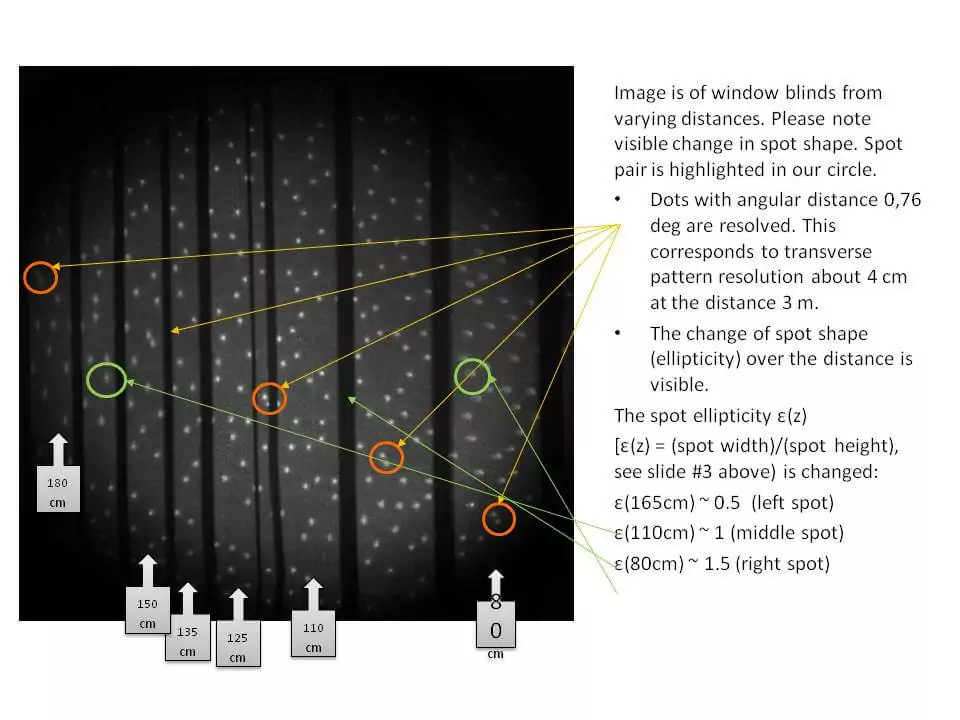

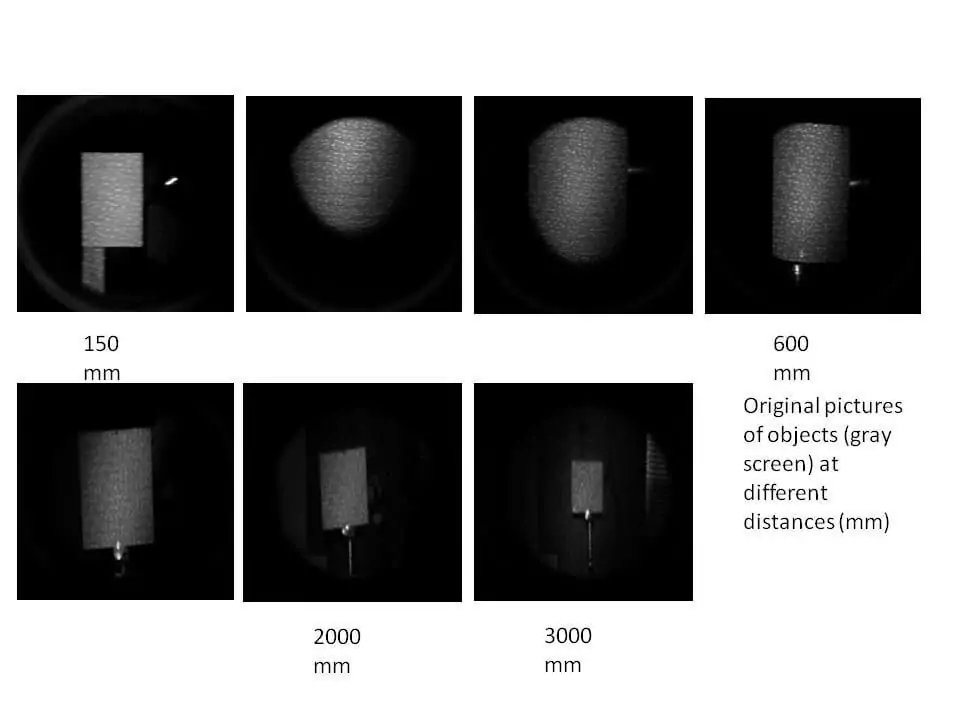

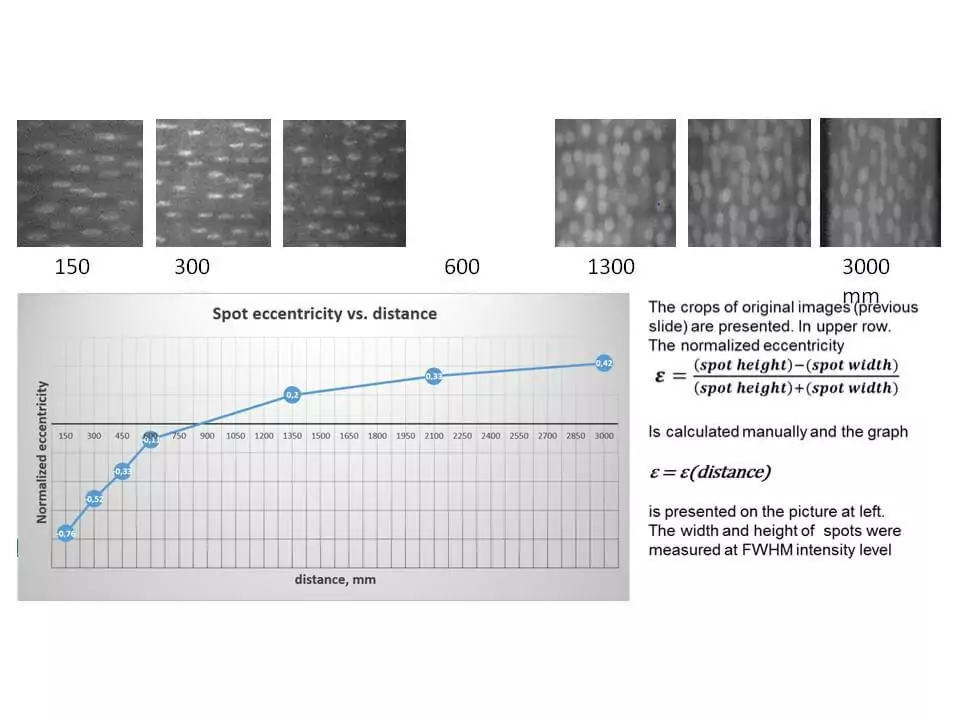

By adding astigmatism to the image-collecting objective lens, the shape of the point spread function (PSF) becomes dependent on the distance to the object: the eccentricity ε of the elliptical PSF varies with the distance to the object.
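The dependence of ε on distance can be illustrated with a simplified geometric-optics sketch. It assumes an ideal thin lens with two different focal lengths along x and y (`fx`, `fy`), a circular aperture, and no diffraction or other aberrations. All parameter names and numbers are illustrative assumptions, not values from the OFH design.

```python
import math


def blur_width(u, f, sensor_dist, aperture):
    """Geometric blur width on the sensor for a point at distance u (thin lens)."""
    if u <= f:
        raise ValueError("object must be farther away than the focal length")
    v = 1.0 / (1.0 / f - 1.0 / u)  # image distance from 1/f = 1/u + 1/v
    # Similar triangles: the light cone converging at v is cut by the sensor plane.
    return aperture * abs(sensor_dist - v) / v


def psf_eccentricity(u, fx, fy, sensor_dist, aperture):
    """Eccentricity of the elliptical blur spot for an object at distance u."""
    wx = blur_width(u, fx, sensor_dist, aperture)
    wy = blur_width(u, fy, sensor_dist, aperture)
    a, b = max(wx, wy), min(wx, wy)
    if a == 0.0:
        return 0.0  # perfectly focused along both axes
    return math.sqrt(1.0 - (b / a) ** 2)


# Illustrative numbers only (all lengths in millimetres).
for u in (500.0, 1000.0, 2000.0):
    eps = psf_eccentricity(u, fx=8.0, fy=8.1, sensor_dist=8.1, aperture=4.0)
    print(f"u = {u:6.0f} mm  eccentricity = {eps:.3f}")
```

In this simplified model each axis comes into focus at a different object distance, so the two blur widths change at different rates and the spot shape encodes the distance.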

Multipath error

Multi-path error is a characteristic feature of TOF-based rangefinders and is caused by unwanted reflections from object surfaces placed at an angle.

Our proposed solution belongs to the “Depth from Defocus” class of range-finding methods, which are based on image analysis rather than on the analysis of light phase shifts used in TOF systems.

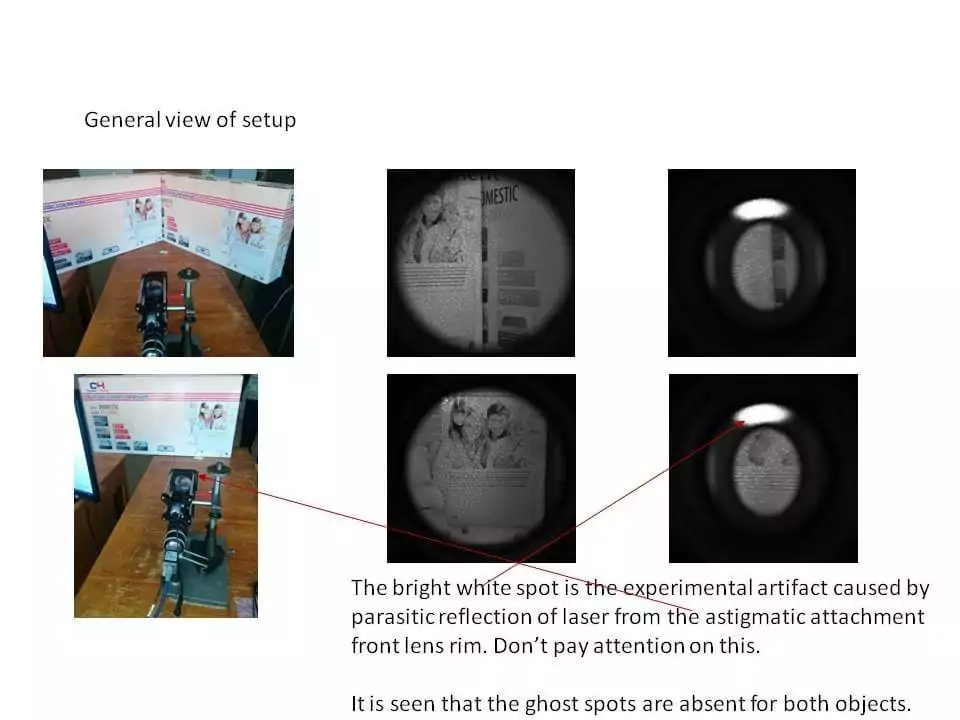

The multi-path error is most pronounced when the angle between the surfaces is 90°, because the returning light travels in exactly the opposite direction. We tested this worst case using two surfaces (glossy paper-coated carton) placed at a 90° angle (see the next slide, upper row of pictures).

For comparison, the bottom row shows pictures of the same object surface laid flat and perpendicular to the system's optical axis.

Accuracy and range

The astigmatic attachment can be optimized for the required distance measurement range, distance measurement (longitudinal) accuracy, working wavelength, and projected pattern geometry.

The transverse resolution of the distance map depends on the number of spots projected.

Resolution, accuracy, and repeatability are influenced by sensor quality and imaging lens quality.

Alternative method: Passive layout

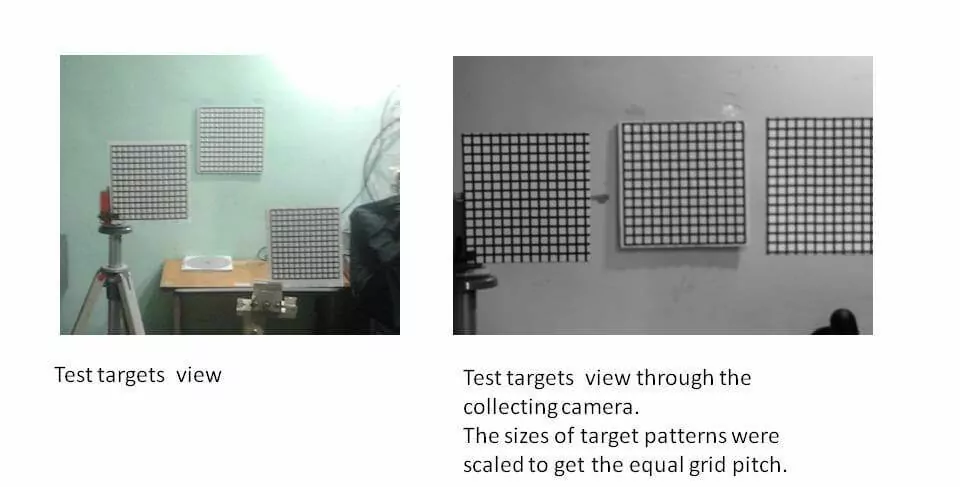

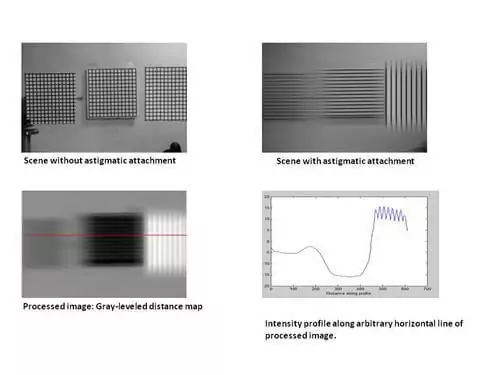

The passive layout means that the system does not use structured illumination. In the image below, test targets have a grid pattern to better show the astigmatic effect. The distances from the camera to the targets are left: 1.2 m, middle: 2.70 m, right: 0.50 m.

Astigmatic effect: passive layout

Advantages:

Low sensitivity to illumination variations across the scene.

Low sensitivity to color differences within the scene.

Easily adapted to off-the-shelf lenses and/or to specific requirements on the range of distances to be recognized.

Disadvantages:

This principle cannot resolve the so-called “White Wall” problem: the object to be measured needs a surface with optically resolvable features.

This disadvantage of the passive layout may be either reduced by improving the image processing algorithms or practically eliminated by adding a structured pattern projector, i.e. transforming the passive system into an active one.
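A minimal sketch of how a passive system might read the astigmatic cue from scene texture, and why a featureless surface defeats it. This is an illustrative assumption rather than OFH's processing pipeline: it compares gradient energy along x and y in an image patch, and the helper names (`sharpness_anisotropy`, `grid_target`, `blur_along_x`) and the `min_energy` threshold are hypothetical. Converting the anisotropy value into a distance would additionally require a calibration, which is not shown.

```python
import numpy as np


def sharpness_anisotropy(patch, min_energy=1e-6):
    """Directional sharpness cue in [-1, 1], or None for a featureless patch.

    Negative values mean detail along x is blurrier than detail along y.
    """
    patch = np.asarray(patch, dtype=float)
    gy, gx = np.gradient(patch)  # derivatives along rows (y) and columns (x)
    ex = float(np.mean(gx ** 2))
    ey = float(np.mean(gy ** 2))
    if ex + ey < min_energy:
        return None  # no resolvable features: the "White Wall" case
    return (ex - ey) / (ex + ey)


def grid_target(size=32, pitch=8):
    """Synthetic grid target, loosely similar to the test targets described above."""
    ys, xs = np.indices((size, size))
    return ((xs % pitch == 0) | (ys % pitch == 0)).astype(float)


def blur_along_x(patch, width=5):
    """Box blur along x only, standing in for astigmatic defocus."""
    kernel = np.ones(width) / width
    return np.apply_along_axis(np.convolve, 1, patch, kernel, mode="same")


target = grid_target()
print(sharpness_anisotropy(target))                    # symmetric grid
print(sharpness_anisotropy(blur_along_x(target)))      # vertical lines smeared
print(sharpness_anisotropy(np.full((32, 32), 0.7)))    # uniform wall
```

The uniform patch returns `None` because there is no gradient energy to compare, which is the situation a projected pattern is meant to fix.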
